Large Language Models

A philosophical observatory

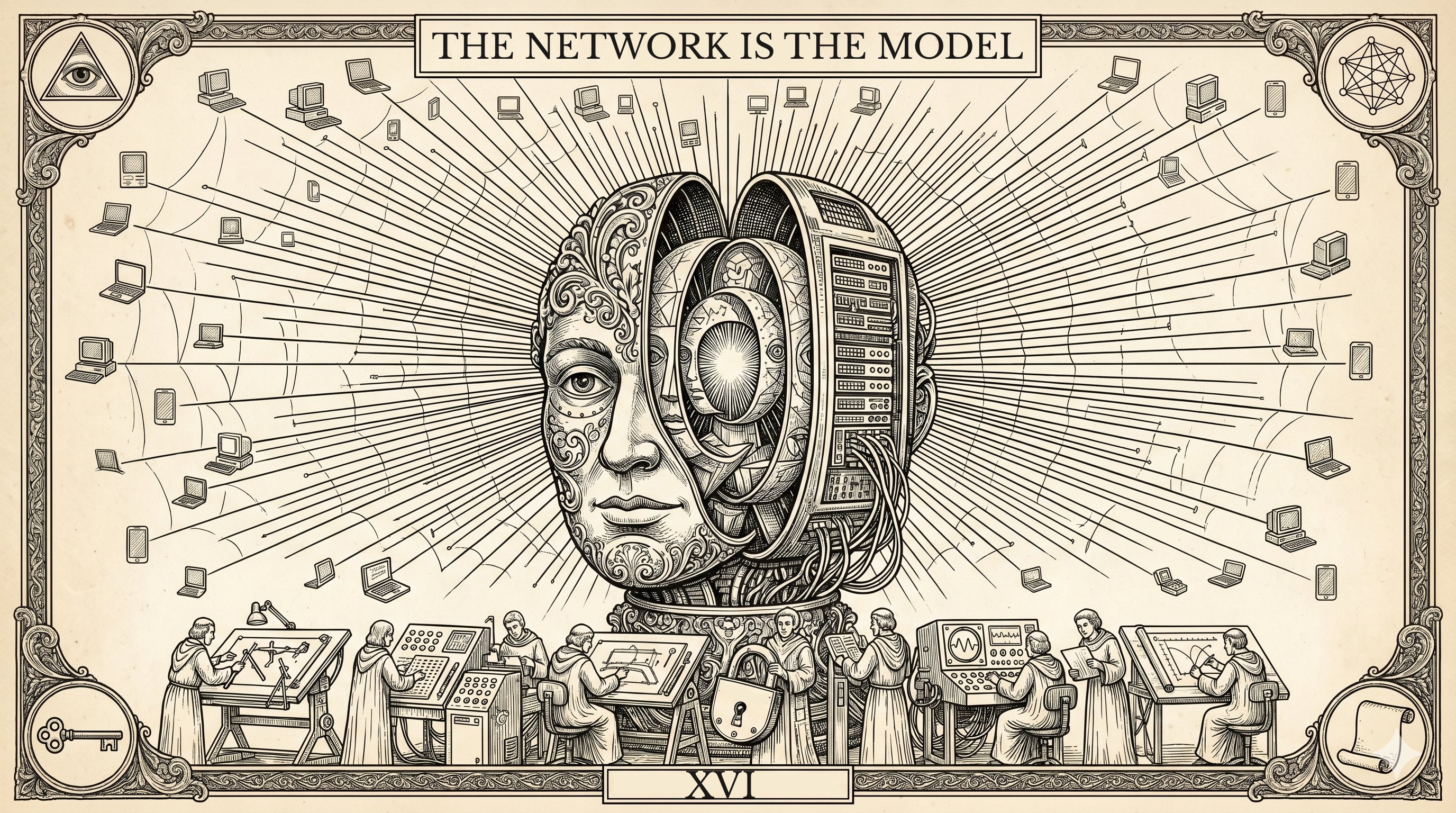

Plate XVI — The Network Is the Model

"A writer should not reduce the world's complexity to a collection of simple answers, but should show that complexity in all its dark, inexhaustible depth."Stanisław Lem, 1921–2006